Search engines use crawlers, also known as web robots, to crawl and index your website. For many website owners, having their content indexed is crucial for increasing their online visibility and, in turn, the traffic to their sites. However, there are cases where you would not want your site to be indexed, and these are worth thinking through before deciding how to discourage search engines from indexing your website.

It is possible to prevent a resource or page within your site from appearing in Google search. In this article, we will look at how to discourage and prevent search engines from indexing your website.

Table Of Contents

Reasons Why You Would Want to Block Search Engines From Indexing Your Website

How To Block Search Engines From Crawling And Indexing Your Website

- Via the default WordPress Search Engine Visibility Checkbox

- Modifying the Robots.txt file

- Password Protecting your Website

Removing a Website from Google Search

Indexing Vs Listing In Google

Indexing

Indexing is the process of collecting and downloading a site's content to the search engine's servers and then storing it in the search engine's central database (the index).

Indexing is preceded by crawling, during which search engines scan the web to identify new or updated content. This content is then used for indexing.

Because indexed content is organized, the search engine can return results relevant to a search query much faster than it could by searching through all content for every query.
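As a rough illustration of why an organized index speeds up lookups, here is a toy inverted index in Python. It is a simplified sketch under our own assumptions (words split on whitespace, a handful of hypothetical pages); it is not how Google or any real search engine actually stores its index.

```python
from collections import defaultdict


def build_index(pages: dict[str, str]) -> dict[str, set[str]]:
    """Map each word to the set of page URLs that contain it (an inverted index)."""
    index: dict[str, set[str]] = defaultdict(set)
    for url, text in pages.items():
        for word in text.lower().split():
            index[word].add(url)
    return index


def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return the pages containing every query word, using only index lookups."""
    words = query.lower().split()
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


# Hypothetical pages that a crawler has already downloaded.
pages = {
    "https://example.com/": "welcome to our wordpress site",
    "https://example.com/blog/seo": "how search engines index a wordpress site",
    "https://example.com/contact": "contact our team",
}

index = build_index(pages)
print(sorted(search(index, "wordpress site")))
# ['https://example.com/', 'https://example.com/blog/seo']
```

Answering the query only requires looking up each word in the index and intersecting the results, rather than rescanning the text of every page.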

Listing

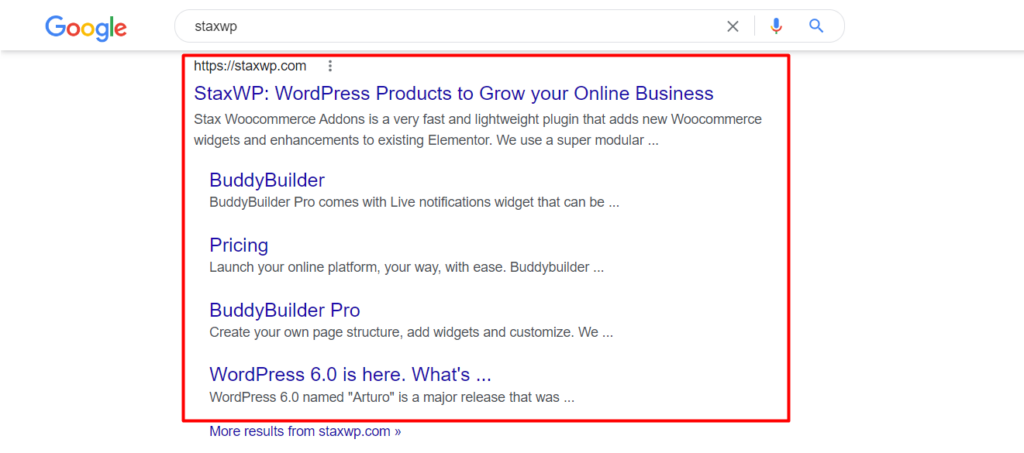

Listing refers to your website being displayed in Search Engine Result Pages (SERPs).

If a website is displayed in the Search Engine Result Pages, then that website has been indexed. The screenshot above shows an example of this.

However, being indexed does not necessarily mean that your website will be listed in SERPs.

It is also important to note that a website does not have to be fully indexed for it to be listed. If there are links pointing to the site's domain or to any other content on the website, Google can use these links to list it.

Reasons Why You Would Want to Block Search Engines From Indexing Your Website

1. When Creating a Development Site

As a site owner, you will usually want to build your website in a development environment before pushing the final version to production.

However, you do not want your development site's content to be indexed by Google, since it would then compete with your production site. To avoid this, disable indexing on your development site.

2. Private Content

If your website contains private content that you would not want accessible to search engines, you can prevent the site from being indexed.

For example, you may have web pages that should only be accessible to subscribers or to logged-in users. Such content should not be made available for indexing.

3. Hacked / Compromised Content

If your site is hacked and compromised, it poses a security threat to your site's users, especially on ecommerce sites.

To reduce such risks, it may be appropriate to deindex the site or even delete it.

4. Duplicate Content

At times, your website may contain duplicate content, especially on ecommerce sites where product pages can be very similar. Duplicate content can hurt how your pages rank in Google, and duplication that appears intended to manipulate search results can lead to penalization.

It is therefore worth deindexing duplicate content on your site to avoid these problems.

5. Outdated Content

In some cases, your website may display outdated information, which search results would then show. Such information can mislead site visitors.

In such situations, it is a good idea to discourage search engines from indexing that content.

6. Leaked Information

If content is made public prematurely, you will need to discourage search engines from indexing it or unpublish the website. This helps keep the content from being found.

7. Harmful Content

If your website contains content that you consider harmful, you can deindex the website. This could be the case, for example, if your site has been hacked and malicious content added to it.

How To Block Search Engines From Crawling And Indexing Your Website

There are several ways to discourage search engines from crawling and indexing your website. We will look at each of them in turn and explore how it helps accomplish this.

Via the default WordPress Search Engine Visibility Checkbox

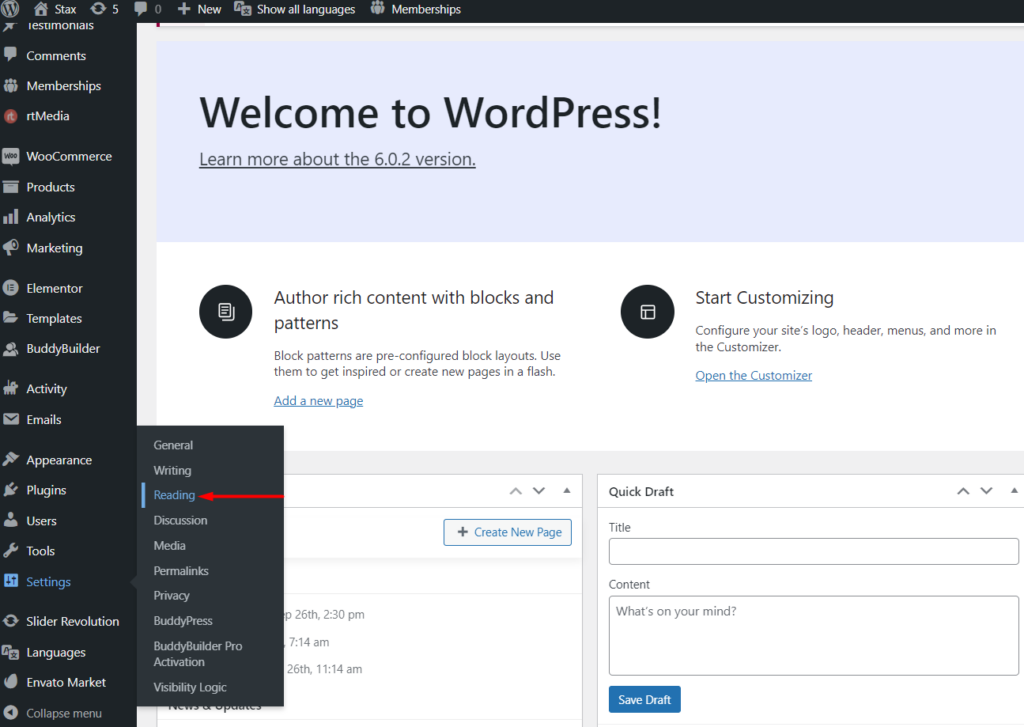

This method lets you discourage search engines from crawling your website directly from the WordPress dashboard. To implement it, you will need to:

i) Log in to your WordPress dashboard using an administrator account

ii) Navigate to the Settings > Reading section within your WordPress dashboard

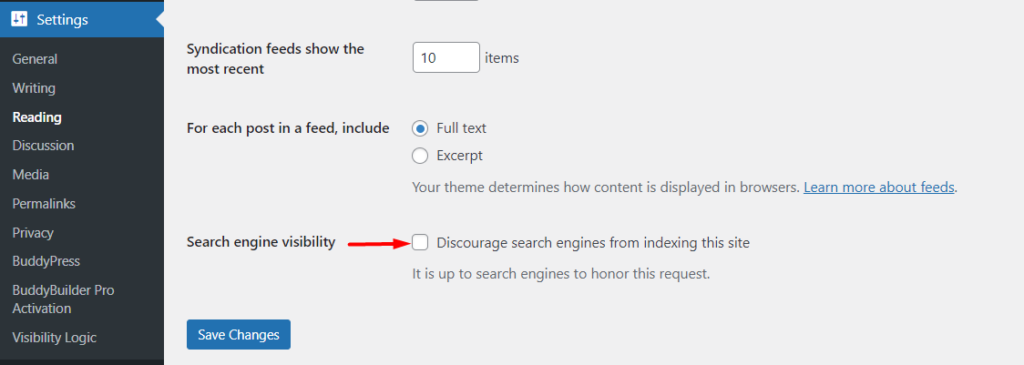

iii) Within the “Search Engine Visibility” section, enable the option “Discourage search engines from indexing this site”

iv) Save your changes

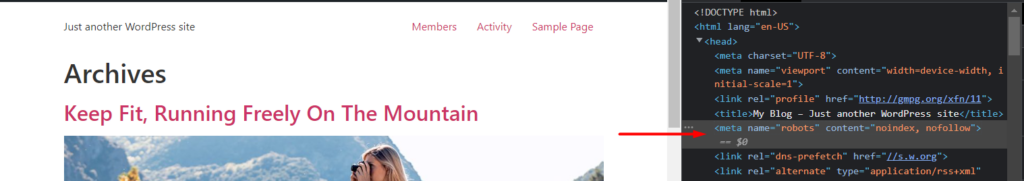

Once you save this setting, the following meta tag is added to your site's header:

<meta name="robots" content="noindex, nofollow">

The robots.txt file is also modified to:

User-agent: *
Disallow: /

This discourages search engines from indexing your website. However, note that these changes only discourage search engines from indexing your site: it is up to each individual search engine whether to honor the request.
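If you want to confirm that the checkbox has taken effect, one option is to look for the robots meta tag in a page's HTML. The following is a minimal sketch using Python's standard `html.parser` module; the class name, function name and sample HTML are our own illustrations, and fetching the live page is left out.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collects the directives from any <meta name="robots"> tags in a page."""

    def __init__(self):
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            # content="noindex, nofollow" -> {"noindex", "nofollow"}
            for directive in attributes.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def is_noindex(html: str) -> bool:
    """Return True if the page asks search engines not to index it."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives


# Hypothetical page header containing the tag shown above.
page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
print(is_noindex(page))  # True
```

Keep in mind that a crawler only reads this tag if it is allowed to fetch the page; a page blocked by robots.txt is never downloaded, so its noindex tag is never seen.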

Modifying the Robots.txt file (manually)

This method has the same effect as the robots.txt change described above, but you edit the file yourself.

To do this, you will need to:

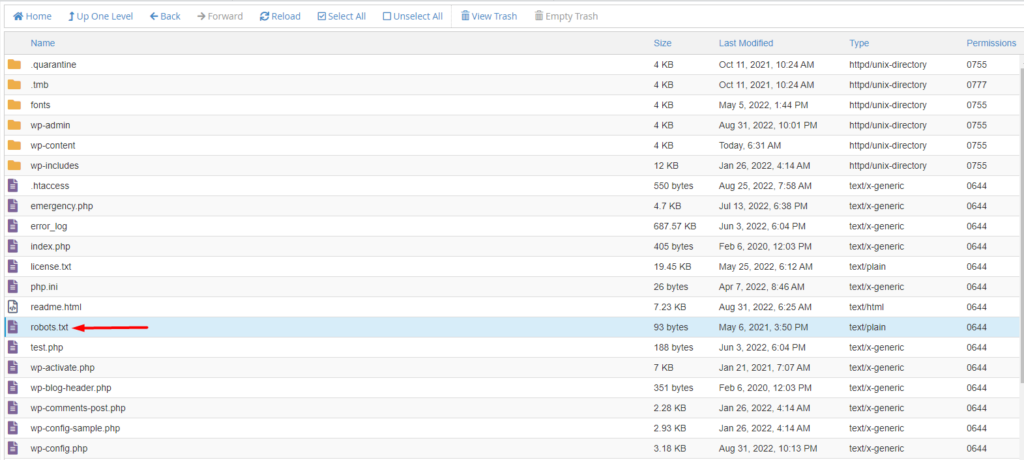

i) Access your site files using an FTP client such as FileZilla

ii) In the root directory of your website files, look for the robots.txt file. In most cases, this is the public_html folder.

If you cannot find the file, create one. (WordPress serves a virtual robots.txt when no physical file exists, so it is normal for the file to be missing.)

iii) Add the following code to the file:

User-agent: *
Disallow: /

iv) Save your changes
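To sanity-check robots.txt rules before uploading them, you can run them through a parser. The sketch below uses Python's standard `urllib.robotparser` on rules supplied as strings, so no network access is needed. The domain `example.com` and the `Googlebot` user agent string are assumptions for illustration, and the page-specific rule uses the example path from the next step.

```python
from urllib.robotparser import RobotFileParser


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content supplied as a string (no network access)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


# The rule from the steps above: block the whole site for every crawler.
block_everything = build_parser("User-agent: *\nDisallow: /\n")

# A rule that blocks a single page only.
block_one_page = build_parser(
    "User-agent: *\nDisallow: /blog/this-is-a-link-to-our-website\n"
)

site = "https://example.com"  # hypothetical domain
for path in ["/", "/blog/this-is-a-link-to-our-website", "/about/"]:
    # Prints: path, allowed under block_everything, allowed under block_one_page
    print(
        path,
        block_everything.can_fetch("Googlebot", site + path),
        block_one_page.can_fetch("Googlebot", site + path),
    )
```

Keep the User-agent and Disallow lines on consecutive lines. Python's parser, for one, discards a User-agent line that is followed by a blank line, so a stray blank line between the two can silently turn the rule off.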

You can also block crawling of specific pages by adding their path (the subdirectory and the slug) to the Disallow: directive. For example:

User-agent: *
Disallow: /blog/this-is-a-link-to-our-website

Password Protecting your Website

Search engines cannot crawl password-protected websites because they do not have access to them. This makes password protection one of the most reliable ways to prevent your website from being indexed.

You can password protect your site using either of these approaches:

i) Password Protection via your hosting control panel

ii) Using a Password protection plugin

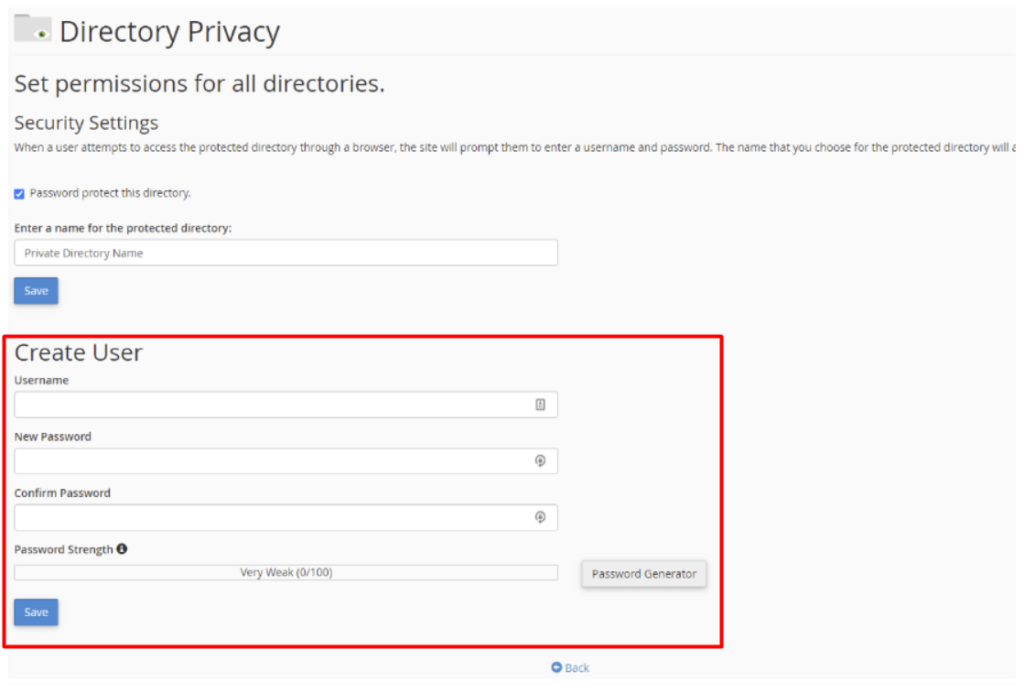

Password Protection via your hosting control panel

The steps for password protecting a website differ between hosting control panels. Here, we will use cPanel as an example.

To password protect your website via cPanel, do the following:

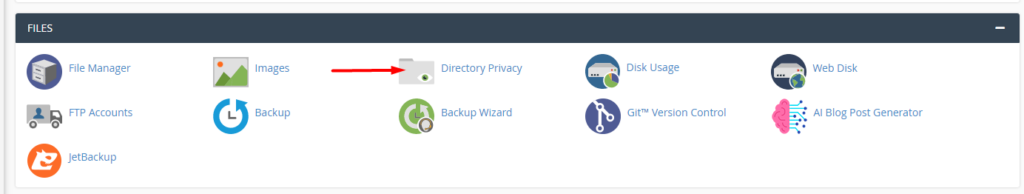

i) Log in to your cPanel account

ii) Navigate to the Files section and select “Directory Privacy”

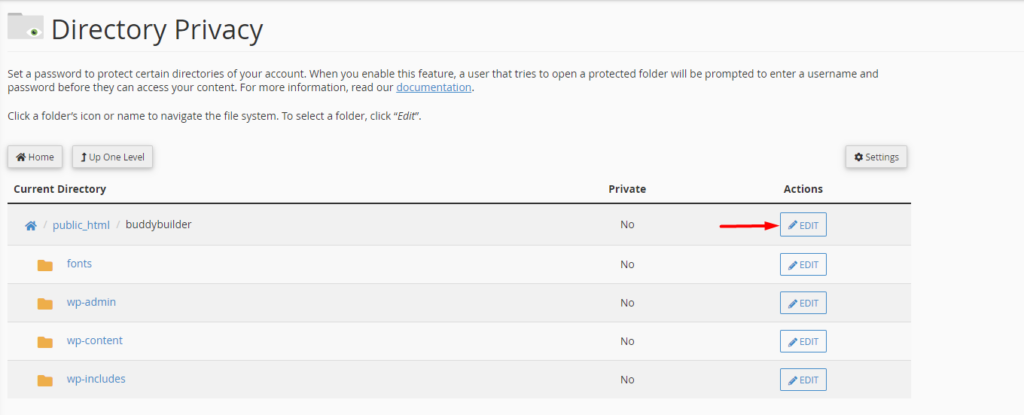

iii) Select your site's root directory. In our case, this is public_html/buddybuilder

iv) Click on the “Edit” action alongside it

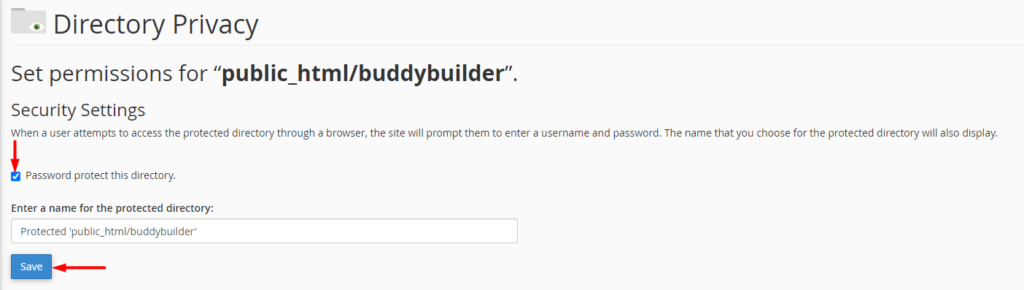

v) Enable the option “Password protect this directory” and save your changes

vi) Click the “Go back” link to return to the previous screen, and in the “Create User” section, set up a new user account that will be used to access the website.

Once this is done, search engines will not be able to crawl your site.
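Why does this stop crawlers? To our understanding, cPanel's Directory Privacy is based on HTTP Basic authentication, so any request without valid credentials, including a crawler's, receives a 401 Unauthorized response instead of your content. The sketch below simulates this locally with Python's standard library. The username, password and realm are hypothetical placeholders, and the local server only stands in for your protected directory.

```python
import base64
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical credentials standing in for the user created under "Create User".
USERNAME, PASSWORD = "siteuser", "change-me"
VALID_AUTH = "Basic " + base64.b64encode(f"{USERNAME}:{PASSWORD}".encode()).decode()


class ProtectedDirectoryHandler(BaseHTTPRequestHandler):
    """Serves a page only to clients that send valid Basic credentials."""

    def do_GET(self):
        if self.headers.get("Authorization") != VALID_AUTH:
            # No (or wrong) credentials: this is what a crawler receives.
            self.send_response(401)
            self.send_header("WWW-Authenticate", 'Basic realm="Protected"')
            self.send_header("Content-Length", "0")
            self.end_headers()
            return
        body = b"<h1>Private page</h1>"
        self.send_response(200)
        self.send_header("Content-Type", "text/html")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence the default per-request logging


def fetch_status(url, auth_header=None):
    """Return the HTTP status code for url, optionally sending credentials."""
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))  # bypass proxies
    request = urllib.request.Request(url)
    if auth_header:
        request.add_header("Authorization", auth_header)
    try:
        with opener.open(request, timeout=5) as response:
            return response.status
    except urllib.error.HTTPError as error:
        error.close()
        return error.code


server = HTTPServer(("127.0.0.1", 0), ProtectedDirectoryHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()
url = f"http://127.0.0.1:{server.server_port}/"

crawler_status = fetch_status(url)            # like a crawler: no credentials
owner_status = fetch_status(url, VALID_AUTH)  # like you: valid credentials

server.shutdown()
server.server_close()
print(crawler_status, owner_status)  # 401 200
```

A crawler never receives the page body, only the 401 response, so there is nothing for it to index.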

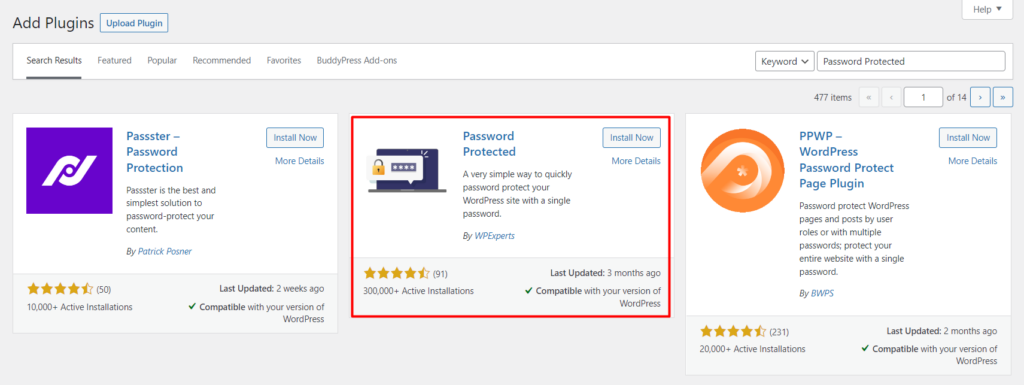

Using a Password protection plugin

For this option, you can use the Password Protected plugin. To set up the plugin, do the following:

i) Navigate to the Plugins > Add New section within your WordPress dashboard and search for “Password Protected”

ii) Install and activate the plugin

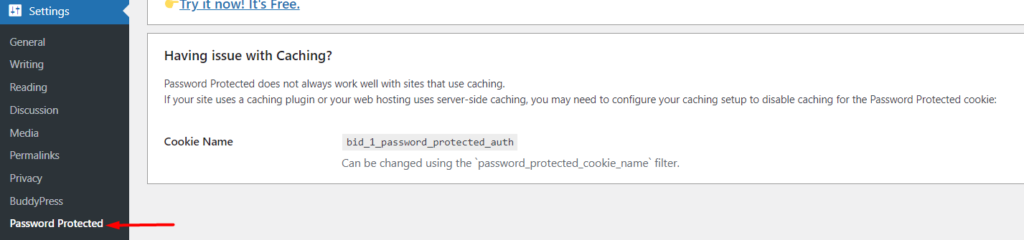

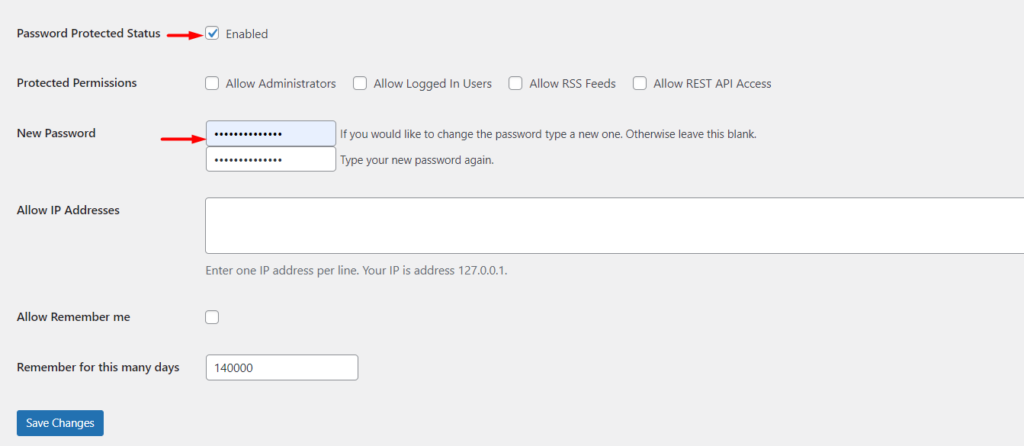

iii) Navigate to the Settings > Password Protected section within your WordPress dashboard

iv) Enable the “Password Protected Status” option and fill in your password

v) Specify the number of days that the site will remain protected

vi) Save your changes

Note that with this method, password protection is not applied when files such as images are opened directly by URL in the browser, because the web server serves them without passing through WordPress. Such files therefore remain publicly accessible.

Removing a Website from Google Search

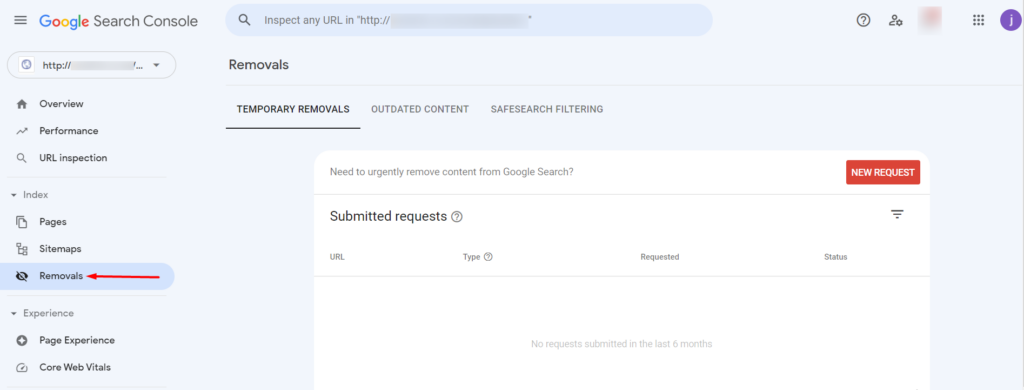

If your site is already indexed by Google and you want to remove it from the search results, do the following:

i) Access the Google Search Console: https://search.google.com/search-console/

ii) Sign in using your account details. If you do not have an account, you can create one from the same link above and add a property. Here is a guide on how to do this: https://support.google.com/webmasters/answer/34592?hl=en

iii) In the top-left section, select the property that contains the URL you want to remove

iv) Click on the “Removals” section

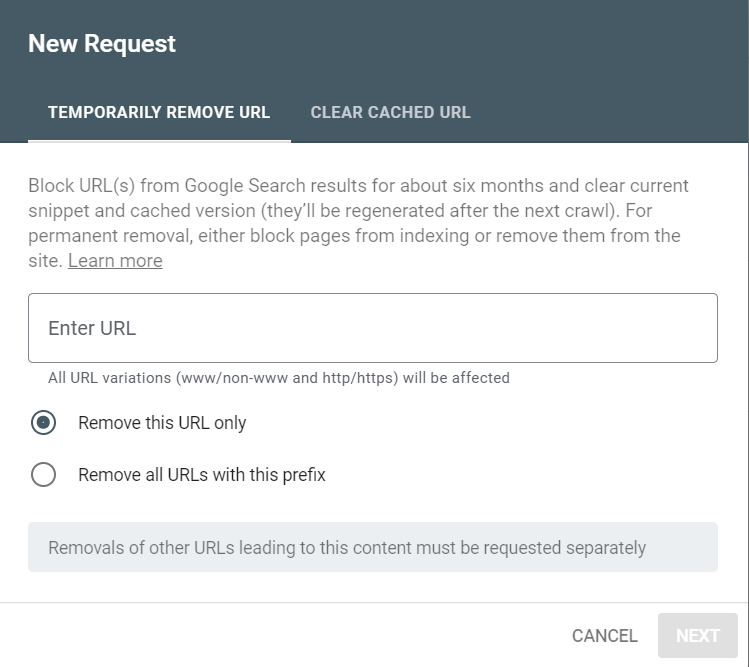

v) Within the “Temporary Removals” tab, click on the “New Request” button

vi) On the “Temporary Remove URL” tab of the popup that appears, you will have two options: “Remove this URL only” and “Remove all URLs with this prefix”.

If you want to remove only a single URL, for example a specific blog post, enter its URL and select the option “Remove this URL only”.

If instead you want to remove the root domain and all URLs under it, enter the root domain as the URL and select the option “Remove all URLs with this prefix”.

vii) Click on the “Next” button to submit your request.
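To make the difference between the two options concrete, here is a small Python model of an exact-match request versus a prefix request. It is our own simplification based on the option names: URLs are treated as plain strings, and any normalization Google may apply is ignored, so this is not Google's actual matching logic. The function name and URLs are hypothetical.

```python
def matched_by_request(indexed_urls, request_url, prefix=False):
    """Simplified model of which URLs a removal request covers.

    prefix=False models "Remove this URL only" (exact match).
    prefix=True models "Remove all URLs with this prefix" (string prefix match).
    """
    if prefix:
        return [url for url in indexed_urls if url.startswith(request_url)]
    return [url for url in indexed_urls if url == request_url]


# Hypothetical URLs currently indexed for a property.
indexed = [
    "https://example.com/",
    "https://example.com/blog/my-post",
    "https://example.com/blog/another-post",
    "https://example.com/shop/",
]

print(matched_by_request(indexed, "https://example.com/blog/my-post"))
# ['https://example.com/blog/my-post']
print(matched_by_request(indexed, "https://example.com/blog/", prefix=True))
# ['https://example.com/blog/my-post', 'https://example.com/blog/another-post']
print(len(matched_by_request(indexed, "https://example.com/", prefix=True)))
# 4
```

Entering the root domain with the prefix option covers every URL under it, which is why that option is the one to use when removing a whole site.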

Note that Google only removes your site from its search results temporarily, usually for about six months. After that, your URLs can be indexed again, which is why it is crucial to also block search engines from crawling and indexing your website using the methods described above.

Conclusion

There are many reasons why you might want to discourage search engines from indexing your website. In this article, we have looked at some of these reasons, as well as several approaches you can use to discourage search engines from indexing your WordPress site.

The WordPress Search Engine Visibility checkbox and the robots.txt method may not be fully effective, since some search engines may ignore them and still crawl, for example, your files or images. We highly recommend pairing these methods with password protection, which prevents search engines from accessing your site content.

We hope this article is helpful. If you have any questions, comments, or suggestions, please feel free to share them in the comments section below.
